Vv: Your Bet Broker Specialist

Sports betting has become a popular pastime for many people around the world. With the rise of online betting, it is easier than ever to bet on your favourite team or player. But what exactly is a sports betting broker?

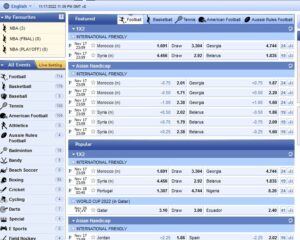

A sports betting broker is a middleman who helps you place your bet with a bookmaker. Brokers usually work with several Asian bookmakers and can help you find the best odds for the game you want to bet on. They can also help with other aspects of sports betting, such as choosing a betting strategy or managing your bankroll.

If you want to place a bet on a particular game, a broker working with Asian bookmakers can be a valuable help. It can find the best odds for you and make sure you get the most out of your sports bets.

Why should you use a bet broker?

There are several reasons to use a bet broker. A broker can save you time and money by finding the best odds for you. It can also advise you on what to bet on and how to manage your money. Bet brokers are specialists in the betting market and can help you make the most of your money.

If you are thinking about using a bet broker, keep a few things in mind. First, bet brokers usually charge a commission for their services, typically a percentage of the amount you stake. Second, you should only use a bet broker if you are serious about betting. A broker can give you plenty of advice, but it can also cost you money if you are not careful.

- A bet broker can help you place bets on a wide variety of sporting events.

- A bet broker can help you manage your betting balance.

- A bet broker can advise you on how best to use your money.

- A bet broker can give you valuable insight into the world of betting.

- A bet brokerage can help you get in touch with other bettors and build a betting community.

Advantages:

- Better odds: bet brokers often have access to better odds than traditional bookmakers, because they are not subject to the same restrictions.

- Highest limits: you can stake large amounts without restriction.

- Wider range of events: as mentioned above, bet brokers cover a wide range of events, so you can place bets on many different sports and competitions.

- Lower risk: betting through a broker can reduce the risk of losing money. You can spread your bets across different events and several bookmakers, which lowers the chance of losing your entire balance on a single bet.

- Betting via Skype: for high-volume bettors, brokers offer the option of placing bets directly over Skype or Telegram.
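The risk-spreading point in the list above can be illustrated with a little arithmetic. The sketch below is only an illustration under simplifying assumptions that are not from the article: the balance is split equally over several bets, the bets are independent, and each has the same (invented) win probability. The function name `prob_total_loss` is hypothetical.

```python
def prob_total_loss(win_probability: float, num_bets: int) -> float:
    """Chance that every one of `num_bets` independent bets loses.

    Assumes the balance is split equally across the bets and that each
    bet wins with the same probability (a simplification).
    """
    if not 0.0 <= win_probability <= 1.0:
        raise ValueError("win_probability must be between 0 and 1")
    if num_bets < 1:
        raise ValueError("num_bets must be at least 1")
    return (1.0 - win_probability) ** num_bets


# Invented example: each bet has a 50% chance of winning.
single = prob_total_loss(0.5, 1)   # whole balance on one bet
spread = prob_total_loss(0.5, 4)   # balance split over four bets
print(f"Chance of losing everything on one bet:  {single:.4f}")
print(f"Chance of losing everything on four bets: {spread:.4f}")
```

This only covers the chance of losing the whole balance at once; it does not say anything about the expected return of the bets themselves.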

Disadvantages:

- Bet brokers charge a small fee.

- You may not receive your winnings as quickly as you would if you had placed the bet yourself.

- Bet brokers may not be available in every country.

How does a bet broker work?

A bet broker is a middleman that connects bettors with bookmakers. Brokers let bettors place bets with several bookmakers without having to open a separate account with each one. This is useful for a number of reasons. First, it can be difficult to open an account with a bookmaker if you live in a country where online gambling is restricted. Bet brokers can help you get around these restrictions by placing your bet for you. Second, bet brokers can help you get better odds. By shopping around among several bookmakers, a broker can find the best odds for your bet and help you get the most for your money.
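The odds comparison described above can be sketched in a few lines of Python. This is only an illustration, not how any particular broker works: the bookmaker names are borrowed from later in this article, the decimal odds are invented, and `Quote` and `best_quote` are hypothetical names rather than part of any broker's software.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Quote:
    bookmaker: str
    decimal_odds: float  # total return per unit staked, stake included


def best_quote(quotes: Sequence[Quote]) -> Quote:
    """Return the quote with the highest decimal odds."""
    if not quotes:
        raise ValueError("no quotes to compare")
    return max(quotes, key=lambda q: q.decimal_odds)


# Invented odds for the same outcome at three bookmakers.
quotes = [
    Quote("Pinnacle", 1.95),
    Quote("SBObet", 1.98),
    Quote("SingBet", 1.93),
]

best = best_quote(quotes)
stake = 100.0
print(f"Best odds: {best.decimal_odds} at {best.bookmaker}")
print(f"Return on a winning {stake:.0f} stake: {stake * best.decimal_odds:.2f}")
```

Even a small difference in odds adds up over many bets, which is why the comparison is done for every single bet rather than once.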

Which are the best bet brokerage services?

After years of practice, this is my personal selection:

1. BetInAsia

BetInAsia is a leading bet broker that offers its customers a wide range of services. It provides a simple, easy-to-use platform that makes online betting convenient and enjoyable, along with a wide range of markets, competitive odds and a secure environment for all its customers.

Its offer is split into two parts:

- Dedicated software (based on Mollybet) for finding the best odds in real time at the leading Asian bookmakers. This offer is called BLACK.

- Access to the Betfair betting market with lower fees. This offer is called ORBIT EXCHANGE.

BetInAsia is a very professional partner you can rely on. It has numerous payment options and I have always been able to withdraw. The first withdrawal each month is free.

More details on the BetInAsia offer can be found here.

2. AsianConnect

AsianConnect is a trusted broker for online betting with Asian sportsbooks that offers a secure way to place bets on your favourite sports and games. It gives access to a wide range of online betting and gaming sites, and its experienced team is on hand to help you find the best odds and prices. It also offers a range of features and services to help you get the most out of your bets, including live streaming, in-play betting and cash-out.

Its offer can be summarised as follows:

- Access to the main Asian bookmakers in one place (including Pinnacle), plus its dedicated ASIANODDS tool for real-time betting at better odds.

- Access to ORBITX & PIWI for betting exchanges.

AsianConnect is the oldest broker still in business and can be trusted. I have always received my payments, but the withdrawal options are more limited than with other brokers.

More details on the AsianConnect offer can be found here.

3. SportMarket

SportMarket offers competitive odds on a wide range of sports bets, which means you can get the best value for your bets. It also offers a wide range of deposit and withdrawal methods, so it is easy to collect your winnings.

SportMarket offers almost the same services as the previous brokers, with a dedicated SportMarket PRO betting platform based on Mollybet. Payment options are numerous, with no deposit fee and one free withdrawal per month. However, this offer is aimed more at professional bettors, with a minimum deposit of €100, and its commissions on betting exchanges are slightly higher than those of other brokers. In return, it gives access to Pinnacle, BetDAq, SBObet, Betfair, BetISN, Matchbook, SingBet, Smarkets…

More details on the SportMarket offer can be found here.

Is using a bet broker expensive?

Bet brokers earn a commission by arranging bets between bettors (the people placing bets) and bookmakers (the companies accepting bets).

In practice, the broker finds someone who wants to bet on a particular outcome and then finds a bookmaker willing to accept that bet. The broker then takes a share of the winnings from the bet as its commission.
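This article mentions two ways a commission can be charged: as a percentage of the stake (earlier) and as a share of the winnings (here). The sketch below compares what you would end up with under each model. The 2% rate, the stake and the odds are invented for illustration, and the function names are hypothetical; actual broker fees may be structured differently.

```python
def net_result_stake_commission(stake: float, decimal_odds: float,
                                rate: float, won: bool) -> float:
    """Net result when commission is a percentage of the stake, win or lose."""
    commission = stake * rate
    gross = stake * (decimal_odds - 1) if won else -stake
    return gross - commission


def net_result_winnings_commission(stake: float, decimal_odds: float,
                                   rate: float, won: bool) -> float:
    """Net result when commission is a percentage of the profit on winning bets only."""
    if not won:
        return -stake  # nothing won, so no commission is taken
    gross = stake * (decimal_odds - 1)
    return gross * (1 - rate)


# Invented example: 100 staked at decimal odds of 2.0 with a 2% commission.
for won in (True, False):
    by_stake = net_result_stake_commission(100.0, 2.0, 0.02, won)
    by_winnings = net_result_winnings_commission(100.0, 2.0, 0.02, won)
    outcome = "win" if won else "loss"
    print(f"{outcome}: stake-based {by_stake:.2f}, winnings-based {by_winnings:.2f}")
```

Under these assumptions the two models give the same result on a winning even-money bet, but only the stake-based model also charges you on a losing one.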

Is using a bet broker legal?

Such intermediaries are not considered sports betting operators. They are therefore not subject to local sports betting laws and do not need a special authorisation. However, they all hold a Curaçao betting licence.

And because you, as the bettor, do not place the bet directly (the broker does), you are not considered to be betting with a bookmaker yourself and are therefore not subject to local sports betting laws either.
